Brain-like “Memtransistor” takes Neuromorphic Computing to Next Level

Advances in machine learning have moved at a gallop in recent years, but the computer processors these programs run on have barely changed. Traditional CPUs process instructions based on “clocked time” – information is transmitted at regular intervals, as if managed by a metronome.

But now a new approach is taking shape: “neuromorphic computing.” By packing in digital equivalents of neurons, neuromorphic chips communicate in parallel (and without the rigidity of clocked time) using “spikes” – bursts of electric current that can be sent whenever needed. Just like our own brains, the chip’s neurons communicate by processing incoming flows of electricity, with each neuron able to determine from the incoming spike whether to send current out to the next neuron.
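To make the idea of a spiking neuron concrete, here is a minimal sketch of a “leaky integrate-and-fire” neuron, a common textbook simplification. It is not taken from the research described in this article; the class name `LeakyNeuron`, the helper `run`, and the threshold and leak values are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class LeakyNeuron:
    """Toy leaky integrate-and-fire neuron (illustrative only)."""
    threshold: float = 1.0  # potential at which the neuron fires (assumed value)
    leak: float = 0.9       # fraction of potential kept between inputs (assumed value)
    potential: float = 0.0  # accumulated charge so far

    def receive(self, current: float) -> bool:
        """Integrate one incoming pulse; return True if the neuron spikes."""
        self.potential = self.potential * self.leak + current
        if self.potential >= self.threshold:
            self.potential = 0.0  # reset after firing
            return True
        return False


def run(neuron: LeakyNeuron, inputs: list[float]) -> list[bool]:
    """Feed a sequence of input pulses to the neuron and record its spikes."""
    return [neuron.receive(x) for x in inputs]


# Two moderate pulses in a row push the neuron over its threshold.
print(run(LeakyNeuron(), [0.6, 0.6, 0.0, 0.0]))  # [False, True, False, False]
```

The neuron stays silent until enough input has accumulated, then fires once and resets, which is the “send current only when needed” behavior in miniature.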

What makes this a big deal is that these chips require far less power to process AI algorithms. Application of neuromorphic semiconductors could prove extremely useful in areas including pattern recognition on a massive scale.

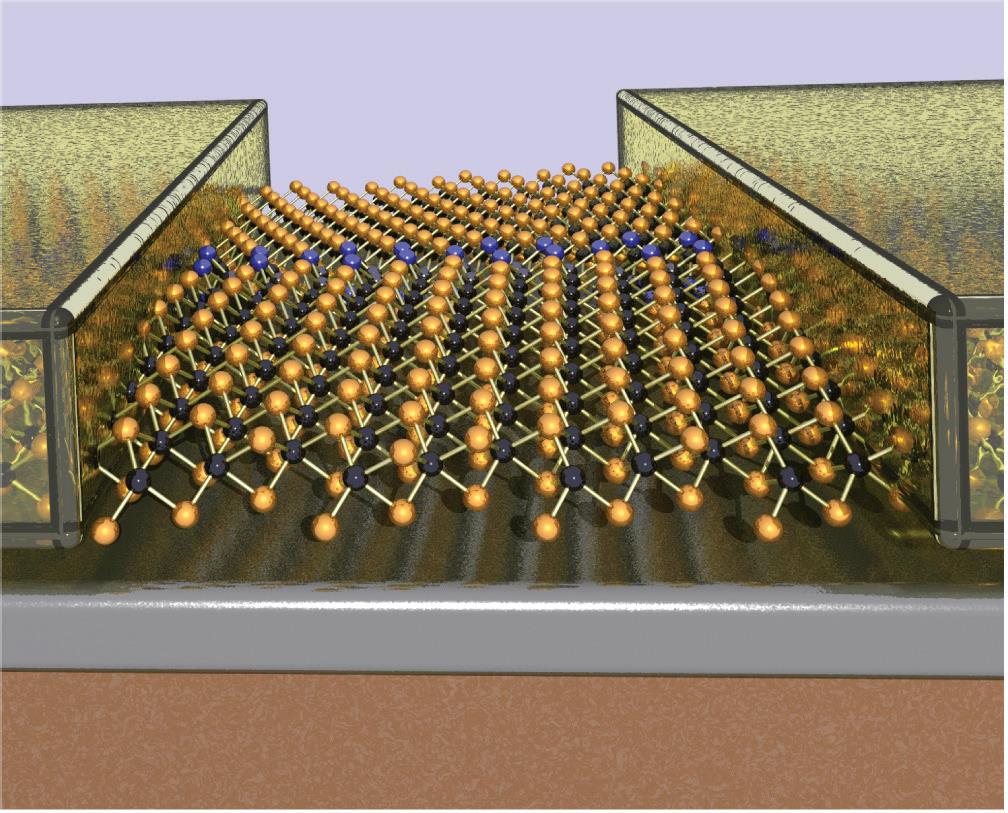

Now, new research has realised this dream by building what is called the “memtransistor,” a multi-terminal device made from a single layer of the semiconductor molybdenum disulfide (MoS2). The device operates much like a neuron by performing both memory and information processing. Combining the characteristics of a memristor and a transistor, the memtransistor also has multiple terminals, so it operates more like a neural network.

This most recent research builds on work that Mark C. Hersam, the co-author of the present study, and his team conducted back in 2015, in which the researchers developed a three-terminal, gate-tunable memristor that operated like a kind of synapse.

While this work was recognized as mimicking the low-power computing of the human brain, critics didn’t really believe that it was acting like a neuron, since it could only transmit a signal from one artificial neuron to another. This fell far short of the human brain, which is capable of making tens of thousands of such connections.

“Traditional memristors are two-terminal devices, whereas our memtransistors combine the non-volatility of a two-terminal memristor with the gate-tunability of a three-terminal transistor,” said Hersam to IEEE Spectrum. “Our device design accommodates additional terminals, which mimic the multiple synapses in neurons.”

Atomically thin MoS2 with well-defined grain boundaries influences the flow of current. Similar to the way fibers are arranged in wood, atoms are arranged into ordered domains, called “grains,” within a material. When a large voltage is applied, the grain boundaries facilitate atomic motion, causing a change in resistance.

“Because molybdenum disulfide is atomically thin, it is easily influenced by applied electric fields. This property allows us to make a transistor. The memristor characteristics come from the fact that the defects in the material are relatively mobile, especially in the presence of grain boundaries,” Hersam explained.
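The two behaviors described here, a resistance that changes under a large voltage and then persists, and a gate that tunes how much current flows, can be sketched as a toy model. This is a deliberately simplified illustration, not the device physics from the study: the class `ToyMemtransistor`, the linear state update, the assumption that a positive gate voltage raises conductance, and every numeric value are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ToyMemtransistor:
    """Toy gate-tunable memristor; all parameters are assumed values."""
    state: float = 0.0      # 0 = high-resistance state, 1 = low-resistance state
    r_off: float = 1e6      # resistance in ohms when state == 0
    r_on: float = 1e4       # resistance in ohms when state == 1
    v_switch: float = 5.0   # drain voltage magnitude needed to move the state
    rate: float = 0.1       # state change per volt above v_switch, per step
    gate_gain: float = 0.1  # conductance boost per volt of positive gate bias

    def resistance(self, v_gate: float = 0.0) -> float:
        """Resistance set by the stored state, then scaled by the gate."""
        base = self.r_off + (self.r_on - self.r_off) * self.state
        return base / (1.0 + self.gate_gain * max(0.0, v_gate))

    def apply(self, v_drain: float, v_gate: float = 0.0) -> float:
        """Apply one voltage step and return the current in amperes."""
        excess = abs(v_drain) - self.v_switch
        if excess > 0:  # only a large voltage moves the internal state
            direction = 1.0 if v_drain > 0 else -1.0
            new_state = self.state + direction * self.rate * excess
            self.state = min(1.0, max(0.0, new_state))
        return v_drain / self.resistance(v_gate)


device = ToyMemtransistor()
device.apply(0.1)                  # small read voltage: state is unchanged
device.apply(10.0)                 # large voltage: resistance drops
print(device.resistance())         # 505000.0
print(device.resistance(v_gate=10.0))  # 252500.0, the gate tunes the same stored state
```

In this sketch the stored state survives later small “read” voltages, which stands in for non-volatility, while the gate argument changes the current without touching what has been stored.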

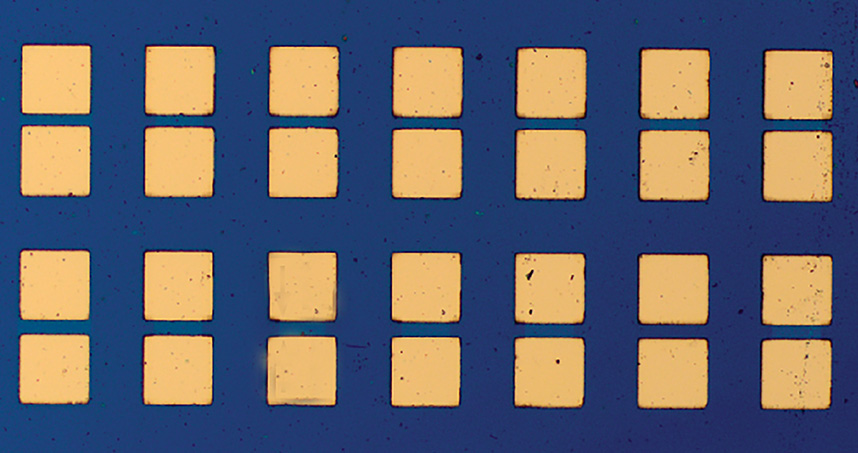

“When the length of the device is larger than the individual grain size, you are guaranteed to have grain boundaries in every device across the wafer. Thus, we see reproducible, gate-tunable memristive responses across large arrays of devices.”

“This is even more similar to neurons in the brain,” Hersam said, “because in the brain, we don’t usually have one neuron connected to only one other neuron. Instead, one neuron is connected to multiple other neurons to form a network. Our device structure allows multiple contacts, which is similar to the multiple synapses in neurons.”

Next, Hersam and his team are working to make the memtransistor faster and smaller. Hersam also plans to continue scaling up the device for manufacturing purposes.

“We believe that the memtransistor can be a foundational circuit element for new forms of neuromorphic computing,” he said. “However, making dozens of devices, as we have done in our paper, is different than making a billion, which is done with conventional transistor technology today. Thus far, we do not see any fundamental barriers that will prevent further scale up of our approach.”